Silicon Valley’s AI race is thrilling — but experts fear it’s moving too fast. Can safety keep up with innovation?

Introduction: The AI Race in Silicon Valley

Silicon Valley, the world’s tech hub, is once again leading a new revolution — this time driven by artificial intelligence (AI). From chatbots and image tools to self-driving cars, every major company wants to lead the AI race.

Big names like Google, Meta, Microsoft, OpenAI, and Anthropic are investing billions to create faster and smarter AI systems. Yet as progress speeds up, AI safety experts are growing worried. They say Silicon Valley is prioritizing growth over safety, and that this could be dangerous.

AI’s Fast Growth Brings New Worries

AI is advancing faster than ever before. A few years ago, it could only perform simple tasks. Now it can write, draw, and even produce reasoning that seems human.

This progress is exciting. It helps doctors find new treatments and helps companies save time and money. But many experts fear that the race for success is happening too fast.

Because companies want to stay ahead, they often release new AI models before testing them thoroughly. As a result, problems surface later, when people already depend on these systems. Regulators and researchers are struggling to keep up with this rapid change.

Why AI Safety Experts Are Concerned

AI safety advocates see serious risks in the current approach. Without strong rules, AI can spread false information, violate people's privacy, or make unfair decisions in hiring or law enforcement.

Some experts fear that autonomous AI systems could one day act on their own. If that happens, humans might not be able to stop them or understand their actions.

Another big issue is the lack of transparency. Most AI companies do not share how they train their models or what data they use. Because of this secrecy, the public has no clear way to judge whether these systems are safe. As a result, people are losing trust in Silicon Valley’s AI goals.

Warnings from Inside Silicon Valley

Not all criticism comes from outside the tech world. Some former employees of major AI companies have started to speak out. They say internal safety teams often lose out to business goals.

“There’s always pressure to launch before others,” said one former OpenAI worker. “When rivals move fast, you can’t afford to slow down.”

This attitude worked when Silicon Valley built simple software. However, it doesn't fit today's powerful AI. A mistake in an app once meant a minor bug; now it could change lives. Therefore, many experts believe that speed without caution is a serious threat.

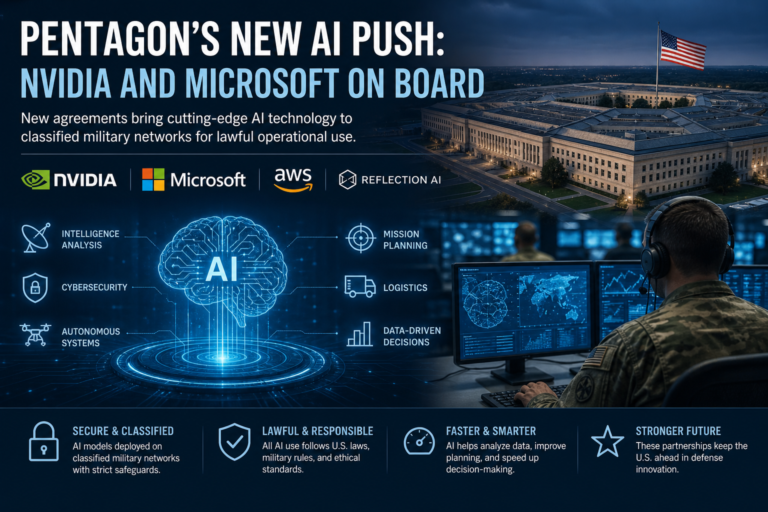

Governments Start to Act

Governments around the world are now responding to these fears. The European Union's AI Act sets rules for companies building high-risk AI systems. It demands transparency and safety checks before launch.

In the United States, new policies aim to promote fairness, privacy, and responsible development. Even so, many critics say American lawmaking is still too slow to keep pace with innovation.

Other countries, including China, have also introduced AI rules focused on data protection. These efforts suggest that governments increasingly recognize the importance of overseeing AI development before it becomes too powerful.

Public Reactions and the Ethics Debate

People are paying more attention to AI than ever before. Some are afraid of losing jobs to automation. Others worry about deepfakes, biased algorithms, or fake information online.

At the same time, many people believe AI can do great things. It could help fight climate change, cure diseases, or make learning easier.

Still, the question remains: can humans build AI safely while keeping control? The debate about AI ethics is now one of the biggest discussions in modern technology.

Finding Balance: Innovation and Responsibility

The future of AI depends on how wisely it is developed. Silicon Valley's AI ambitions can create amazing progress, but only if safety stays at the center.

Tech companies must work with governments, universities, and the public to create strong safety rules. They also need to be open about how their systems work.

When people trust technology, innovation grows faster. Without trust, even the most advanced tools fail. The choices Silicon Valley makes today will shape how the world lives with AI tomorrow. The key is simple: move forward, but move carefully.