Google DeepMind employees raise concerns over military AI and its ethical risks

Introduction

In recent months, employees at Google DeepMind have begun to speak out about military uses of the company's AI. At first, they raised their concerns internally. Now the issue is public, and it is drawing wider attention.

AI Ethics and Military Concerns

Artificial intelligence can improve many areas of life, from healthcare to education. Its military use, however, is far more contested. Employees worry that the systems they build could end up supporting warfare or surveillance, so they want clear rules on what their work may and may not be used for.

Growing Awareness Among Employees

As employees have learned more about the company's defense-related projects, many have begun to question their own role in that work and to press leaders for clear answers. This growing awareness is now driving them to act.

Demand for Transparency and Accountability

Employees want candor from company leaders. In particular, they are asking how the company's AI is being used and why key decisions about its use were made. When those questions go unanswered, trust erodes, which is why workers are also pushing for accountability.

Influence of Tech Industry Protests

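Tech workers have spoken out before, and companies have sometimes changed course as a result. In 2018, for example, Google employees protested the company's work on Project Maven, a Pentagon program that used AI to analyze drone footage, and Google later decided not to renew that contract. Precedents like this give Google DeepMind employees more confidence to raise their voices, and some are also exploring unionization.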

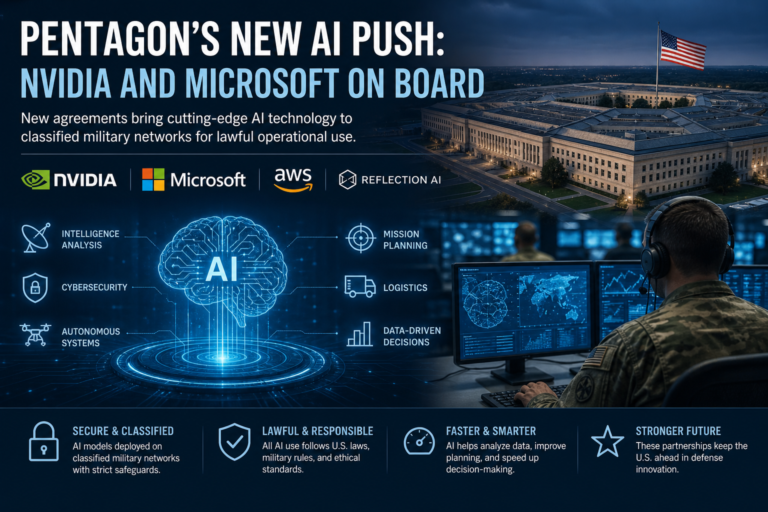

Long-Term Risks of Military AI

Many employees are also worried about the longer term. They fear an arms race in AI-enabled weapons, which could raise global security risks. They also worry that AI is advancing faster than the laws meant to govern it, which could weaken meaningful human control over these systems. For these reasons, they believe action is needed now.

Industry-Wide Shift in Responsibility

The issue extends beyond Google DeepMind to the tech industry as a whole. Workers across the sector are speaking up more than before, which puts pressure on their companies to act. Meanwhile, public awareness of the stakes is also growing.

Conclusion

Employees at Google DeepMind are speaking out for clear reasons: they want AI to be used ethically, and they want transparency and accountability from the company's leaders. Ultimately, their actions could help shape how AI is developed and deployed.